Overview

I had the opportunity to run a local LLM with Ollama on mdx.jp’s 1GPU pack, so these are my notes on the process.

https://mdx.jp/mdx1/p/guide/charge

References

I referred to the following article.

https://highreso.jp/edgehub/machinelearning/ollamainference.html

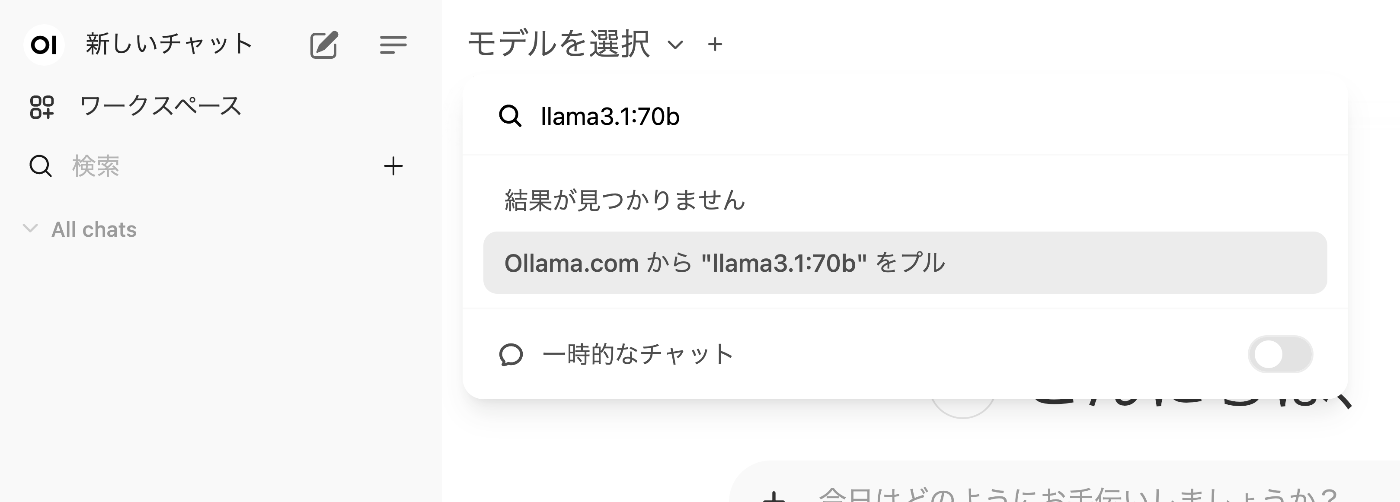

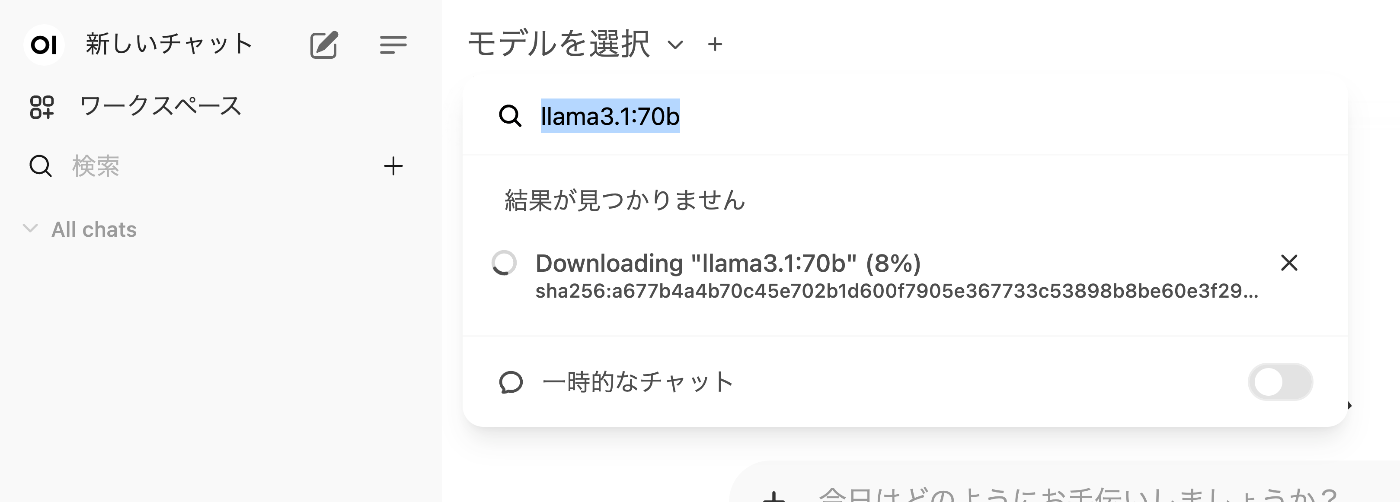

Downloading the Model

Here, we target llama3.1:70b.

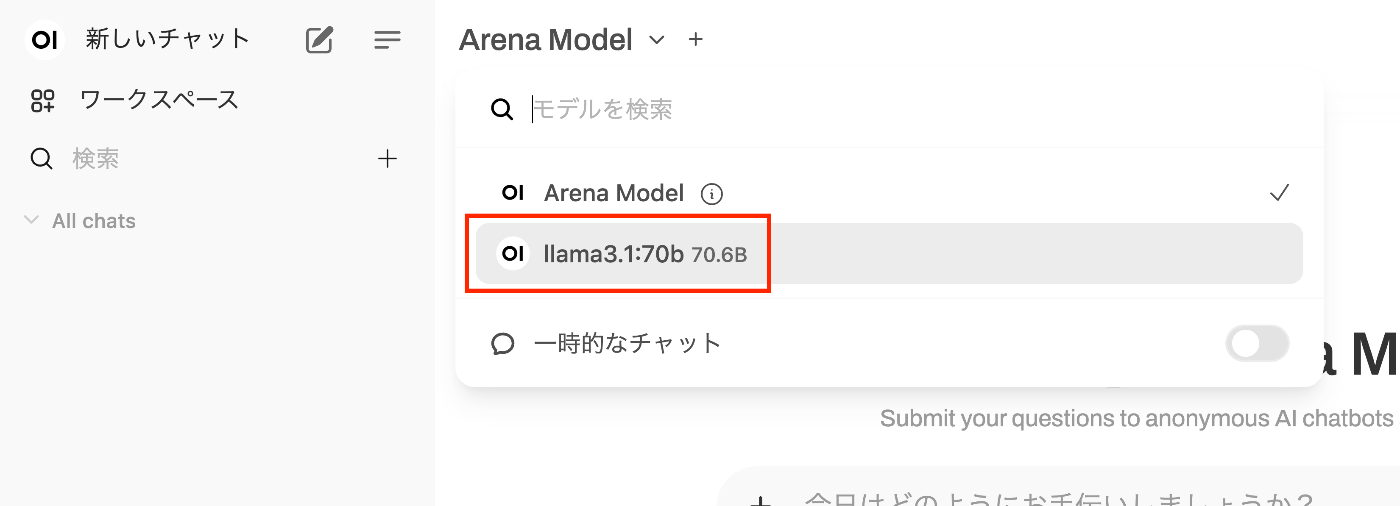
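The screenshot shows the download being started from the web UI. The same model can also be pulled through Ollama's REST API (`POST /api/pull`). The sketch below assumes Ollama is listening on its default address `http://localhost:11434` on the same machine. The helper names `build_pull_request` and `pull_model` are illustrative and are not part of Ollama.

```python
import json
import sys
import urllib.request

# Assumption: Ollama is listening on its default port on the same machine.
OLLAMA_URL = "http://localhost:11434"


def build_pull_request(model: str, base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a POST /api/pull request for the given model tag (hypothetical helper)."""
    body = json.dumps({"model": model}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/pull",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def pull_model(model: str, base_url: str = OLLAMA_URL) -> None:
    """Download a model and print the progress messages Ollama streams back."""
    with urllib.request.urlopen(build_pull_request(model, base_url)) as resp:
        # Ollama streams one JSON object per line until the pull finishes.
        for line in resp:
            if not line.strip():
                continue
            update = json.loads(line)
            if "error" in update:
                raise RuntimeError(update["error"])
            done, total = update.get("completed"), update.get("total")
            if done is not None and total:
                print(f"{update.get('status')}: {done / total:.0%}")
            else:
                print(update.get("status"))


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python pull_model.py llama3.1:70b
    pull_model(sys.argv[1])
```

Running `python pull_model.py llama3.1:70b` should print progress until a final `success` status. The 70B download is large, so expect this to take a while.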

After the download completes, the model becomes selectable as shown below.

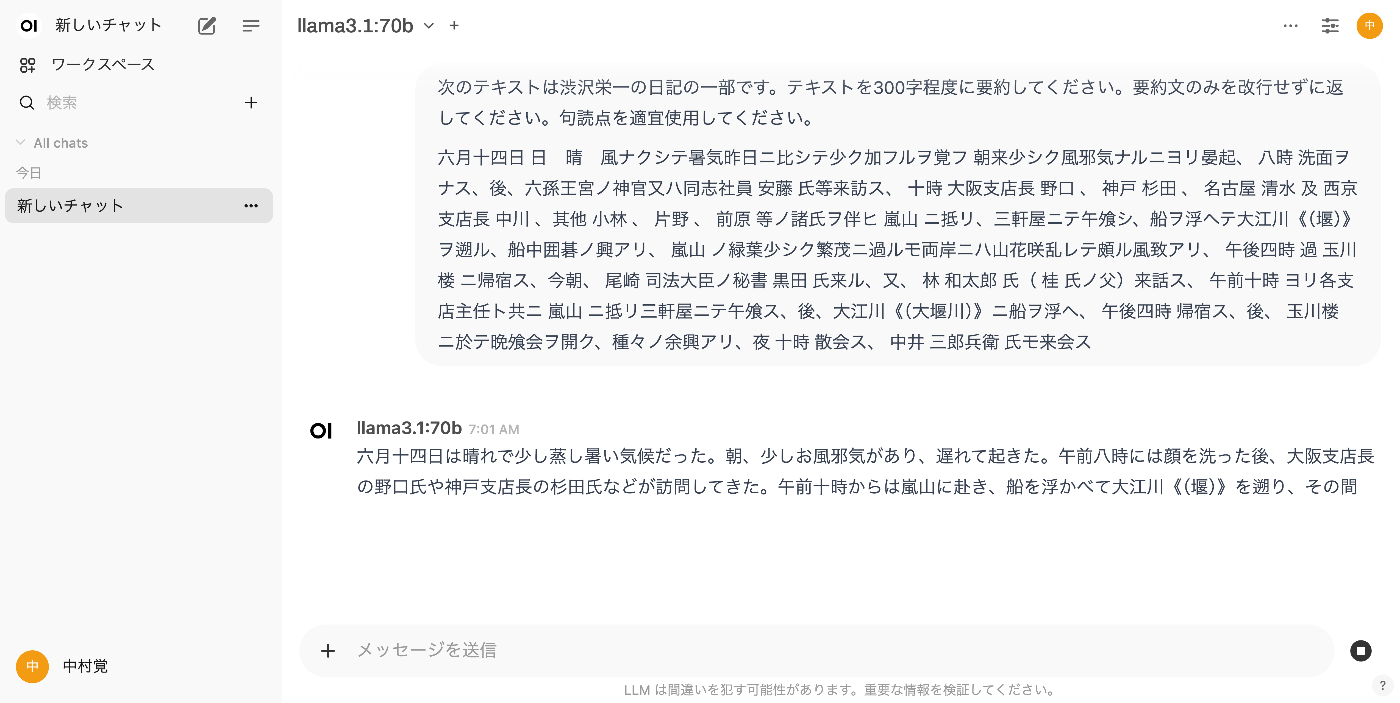

Usage Example

We use the following “Shibusawa Eiichi Biographical Materials.”

https://github.com/shibusawa-dlab/lab1

Using the API

The API is documented at the following page.

https://docs.openwebui.com/api/

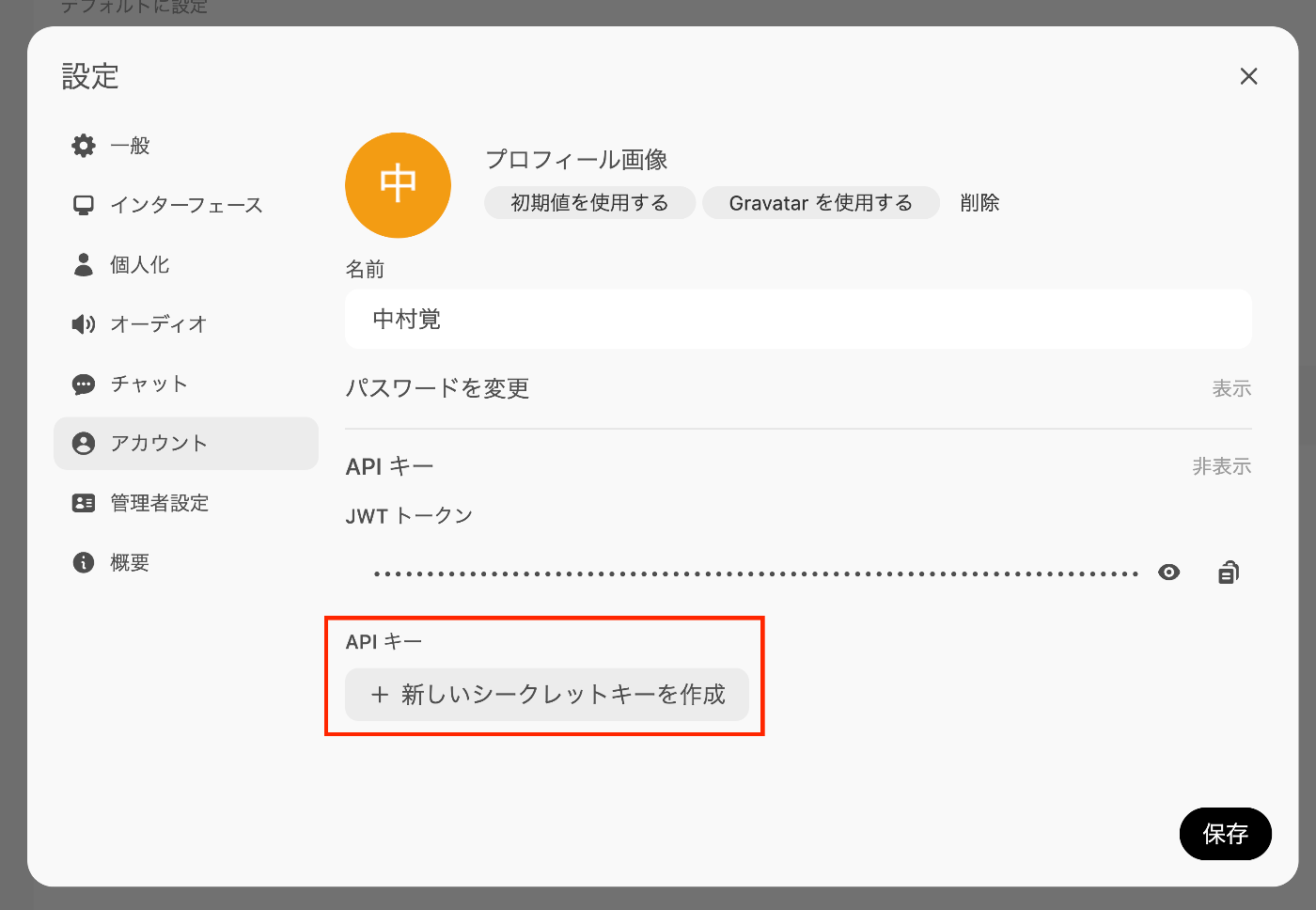

Next, issue an API key, which is separate from the JWT token.

Here is an execution example.
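Below is a minimal Python sketch of such a call. It assumes Open WebUI's OpenAI-compatible `POST /api/chat/completions` endpoint with the API key sent as a Bearer token. The base URL `http://localhost:3000`, the environment variable `OPENWEBUI_API_KEY`, and the helper names are assumptions for illustration, and the prompt is passed on the command line. The helper also times the request, which is how figures like the response times below can be measured.

```python
import json
import os
import sys
import time
import urllib.request
from typing import Tuple

# Assumption: Open WebUI is reachable at this URL; adjust host/port to your setup.
BASE_URL = "http://localhost:3000"


def build_chat_request(api_key: str, model: str, prompt: str,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request (hypothetical helper)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON response
    }
    return urllib.request.Request(
        f"{base_url}/api/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # the API key, not the JWT token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_answer(response_body: dict) -> str:
    """Return the assistant text from an OpenAI-compatible response."""
    return response_body["choices"][0]["message"]["content"]


def chat(api_key: str, model: str, prompt: str) -> Tuple[str, float]:
    """Send the request and return (answer, elapsed seconds)."""
    start = time.perf_counter()
    with urllib.request.urlopen(build_chat_request(api_key, model, prompt)) as resp:
        body = json.load(resp)
    return extract_answer(body), time.perf_counter() - start


if __name__ == "__main__" and len(sys.argv) > 2:
    # Usage: python chat.py <model> <prompt>; the key comes from a hypothetical env var.
    answer, elapsed = chat(os.environ["OPENWEBUI_API_KEY"], sys.argv[1], sys.argv[2])
    print(answer)
    print(f"elapsed: {elapsed:.1f} s")
```

For example, `python chat.py llama3.1:70b "<prompt>"` prints the model's reply followed by the elapsed time. Changing the first argument is enough to compare models.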

As a result, the following output was obtained. However, the response took nearly 60 seconds.

June 14th was sunny with no wind and it was hot. I had a slight cold in the morning, but washed my face at 8 o’clock, after which officials from the Rokusonno Shrine and Mr. Ando from Doshisha visited. Then, together with Osaka branch manager Mr. Noguchi, Kobe and Nagoya branch managers and others, we went to Arashiyama, had lunch at Sangenya, and rowed a boat up the Oi River. We enjoyed playing Go on the boat. The green foliage at Arashiyama was slightly sparse, but mountain flowers bloomed in profusion on both banks, making for beautiful scenery. We returned to Tamagawa-ro after 4 PM. Secretary Kuroda of Justice Minister Ozaki and Mr. Hayashi Wataro (father of Mr. Katsura) came to visit. From 10 AM, together with branch managers, we went to Arashiyama, had lunch at Sangenya, and rowed a boat on the Oi River. We returned at 4 PM, then held a dinner party at Tamagawa-ro with various entertainments. The gathering ended at 10 PM, with Mr. Nakai Sarobei also attending.

Switching the model to llama3.1:latest (8.0B) returned a result in about 6 seconds.

(The 8B model's output read less naturally in Japanese than the 70B model's.)

While it takes more time, the llama3.1:70b (70.6B) model seems to produce more natural Japanese.
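For a rough sense of the trade-off, the two single measurements can be set against the parameter counts. With only one run per model, this is indicative rather than a benchmark.

$$
\frac{70.6\ \text{B}}{8.0\ \text{B}} \approx 8.8,
\qquad
\frac{\approx 60\ \text{s}}{\approx 6\ \text{s}} \approx 10
$$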

Summary

I ran a local LLM using mdx.jp’s 1GPU pack and Ollama.

I hope this serves as a helpful reference for using local LLMs.