Previously, I built an inference app on Hugging Face Spaces using a YOLOv5 model trained on the NDL-DocL dataset.

This time, I modified that app so that it also returns its detection results as JSON, as shown in the following diff:

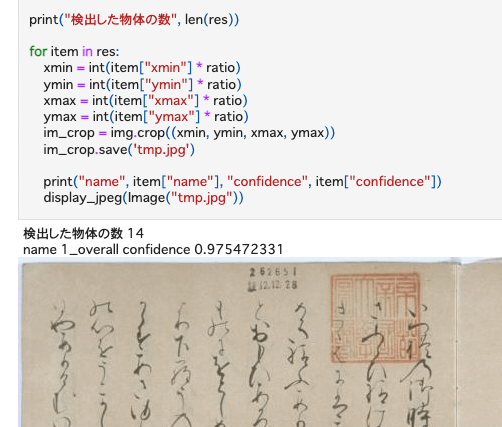
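As a rough illustration of the idea (not the code in the diff above), the sketch below converts YOLOv5-style detections into a JSON string that a Gradio function could return alongside the annotated image. The field names (`xmin`, `ymin`, `xmax`, `ymax`, `confidence`, `class`, `name`) are an assumption modeled on the layout of YOLOv5's pandas output, and the helper name `detections_to_json` is hypothetical.

```python
import json
from typing import Iterable, Sequence

# Assumed field order, modeled on YOLOv5's results.pandas().xyxy columns;
# the actual app may use a different layout.
FIELDS = ("xmin", "ymin", "xmax", "ymax", "confidence", "class", "name")


def detections_to_json(rows: Iterable[Sequence]) -> str:
    """Hypothetical helper: serialize one tuple per box as a JSON array of objects."""
    records = [dict(zip(FIELDS, row)) for row in rows]
    # ensure_ascii=False keeps non-ASCII (e.g. Japanese) label names readable.
    return json.dumps(records, ensure_ascii=False)


if __name__ == "__main__":
    # Made-up rows with placeholder labels, for illustration only.
    rows = [
        (10.0, 20.0, 110.0, 220.0, 0.91, 0, "label_a"),
        (5.0, 300.0, 400.0, 380.0, 0.42, 1, "label_b"),
    ]
    print(detections_to_json(rows))
```

In a Gradio app, a string like this (or the list of records itself, shown through a `gr.JSON` output component) could be returned as an extra output next to the rendered image.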

Because the results are now returned as JSON, they can be processed further in code. The following notebook shows an example that calls the app through the Gradio API:

https://github.com/nakamura196/ndl_ocr/blob/main/GradioのAPIを用いた物体検出例.ipynb
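The notebook linked above is the reference for calling the app through the Gradio API. As a small, hedged sketch of the kind of post-processing that JSON output makes possible, the code below parses a JSON string in the assumed record layout (the same hypothetical `xmin`/`ymin`/`confidence`/`name` fields as above), keeps boxes at or above a confidence threshold, and sorts them top to bottom, then left to right. The function name `filter_and_sort` and the default threshold of 0.5 are hypothetical choices.

```python
import json
from typing import Any, Dict, List


def filter_and_sort(json_text: str, min_conf: float = 0.5) -> List[Dict[str, Any]]:
    """Hypothetical helper: keep detections with confidence >= min_conf,
    ordered by their top edge (ymin) and then their left edge (xmin)."""
    records = json.loads(json_text)
    kept = [r for r in records if r["confidence"] >= min_conf]
    return sorted(kept, key=lambda r: (r["ymin"], r["xmin"]))


if __name__ == "__main__":
    # Made-up JSON in the assumed layout, for illustration only.
    sample = json.dumps([
        {"xmin": 0, "ymin": 50, "xmax": 10, "ymax": 60, "confidence": 0.9, "class": 0, "name": "label_a"},
        {"xmin": 0, "ymin": 10, "xmax": 10, "ymax": 20, "confidence": 0.8, "class": 1, "name": "label_b"},
        {"xmin": 0, "ymin": 5, "xmax": 10, "ymax": 6, "confidence": 0.1, "class": 0, "name": "label_a"},
    ])
    for r in filter_and_sort(sample):
        print(r["name"], r["confidence"], (r["xmin"], r["ymin"], r["xmax"], r["ymax"]))
```

Sorting by the top-left corner is only a simple default; for vertically written Japanese pages a different ordering (for example, right to left by `xmax`) may suit the layout better.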

There may be better approaches, but I hope this serves as a useful reference.